Master Explainable AI: Interpreting Image Classifier Decisions

Discover Explainable AI tools for interpreting deep learning classifiers. Use saliency maps, class activation maps, and interpretability metrics to lead the GenAI revolution and future-proof your skills.

5.0

34 Lessons

2 Projects

7h

Join 2.9 million developers at

LEARNING OBJECTIVES

- A deep understanding of the need for, and benefits of, Explainable AI

- The ability to design and implement popular explanation algorithms

- Hands-on experience combining existing explanation methods to generate more robust explanations

- An understanding of the explainers used to interpret the decisions of a neural network

- The ability to evaluate and quantify the quality of the neural network explanations

Learning Roadmap

1. Introduction to Explainable AI

Get familiar with Explainable AI to understand and implement transparent, interpretable AI systems.

2. Saliency Maps

Unpack the core of various saliency map techniques to interpret image classifier decisions.

3. Class Activation Maps

6 Lessons

Work your way through Class Activation Maps, GradCAM, X-GradCAM, Eigen-CAM, and Ablation-CAM techniques.

4. Miscellaneous Methods

6 Lessons

Apply your skills to various advanced methods for interpreting AI image classifiers.

5. Metrics of Interpretability

7 Lessons

Dig into interpretability metrics for AI, such as feature agreement, rank correlation, predictive faithfulness, and fairness.

Certificate of Completion

Showcase your accomplishment by sharing your certificate of completion.

Developed by MAANG Engineers

ABOUT THIS COURSE

Explainable AI is a set of tools and frameworks that help you understand and interpret the internal logic behind a deep learning network's predictions. The resulting insights into how the model behaves let you identify and mitigate its issues during the development phase.

In this course, you will be introduced to popular Explainable AI algorithms, such as SmoothGrad, Integrated Gradients, LIME, class activation maps, counterfactual explanations, and feature attributions, applied to image classification networks such as MobileNet-V2 trained on large-scale datasets like ImageNet-1K.

By the end of this course, you will understand the need for Explainable AI and be able to design and implement popular explanation algorithms, such as saliency maps, class activation maps, and counterfactual explanations. You will also be able to evaluate and quantify the quality of neural network explanations using several interpretability metrics.

ABOUT THE AUTHOR

Puneet Mangla

Data and Applied Scientist at Microsoft Advertising, working on ad quality checks. As a part-time technical writer, I love teaching machine learning concepts through blogs and courses.

Trusted by 2.9 million developers working at companies

Anthony Walker

@_webarchitect_

Evan Dunbar

ML Engineer

Carlos Matias La Borde

Software Developer

Souvik Kundu

Front-end Developer

Vinay Krishnaiah

Software Developer

Built for 10x Developers

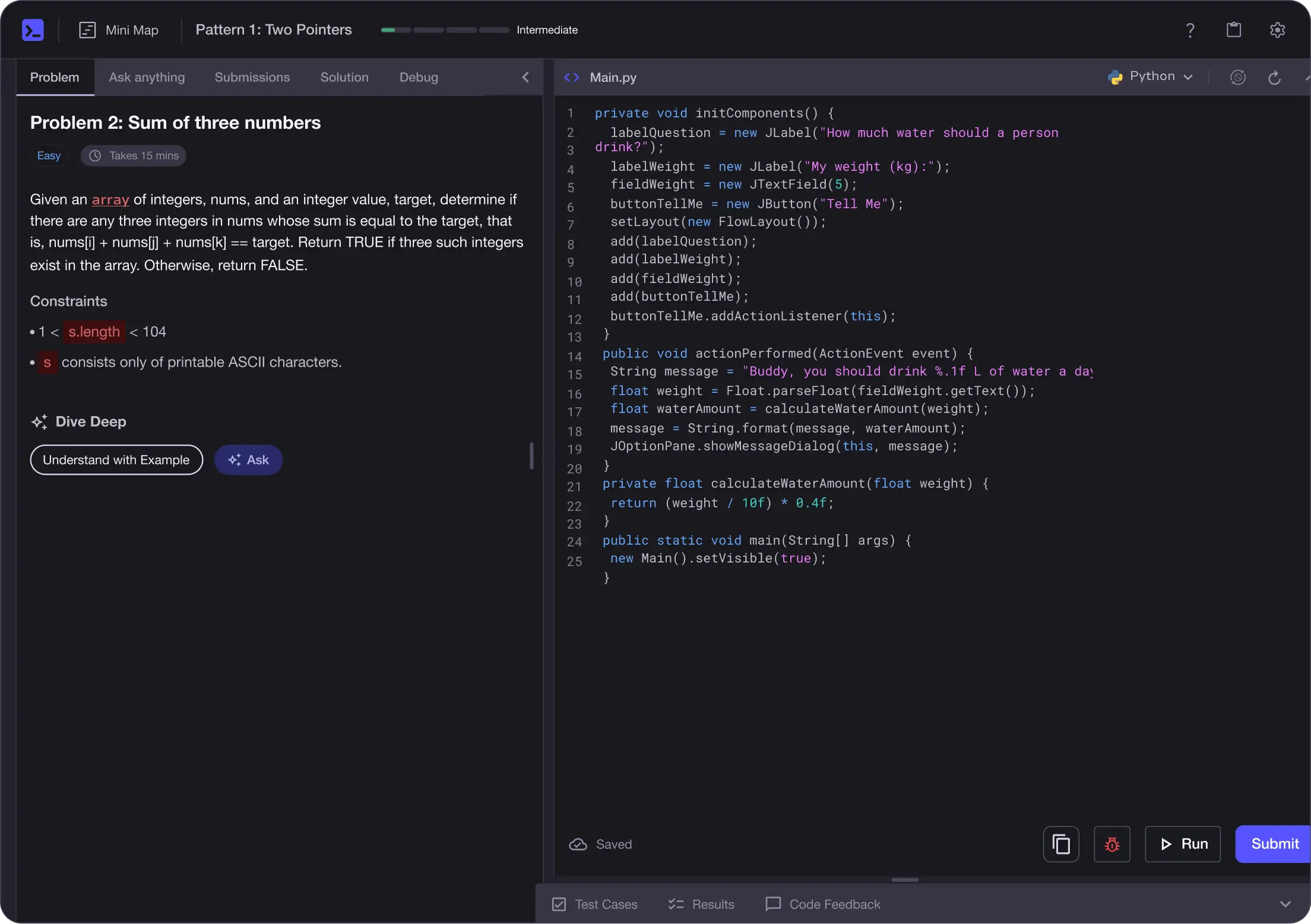

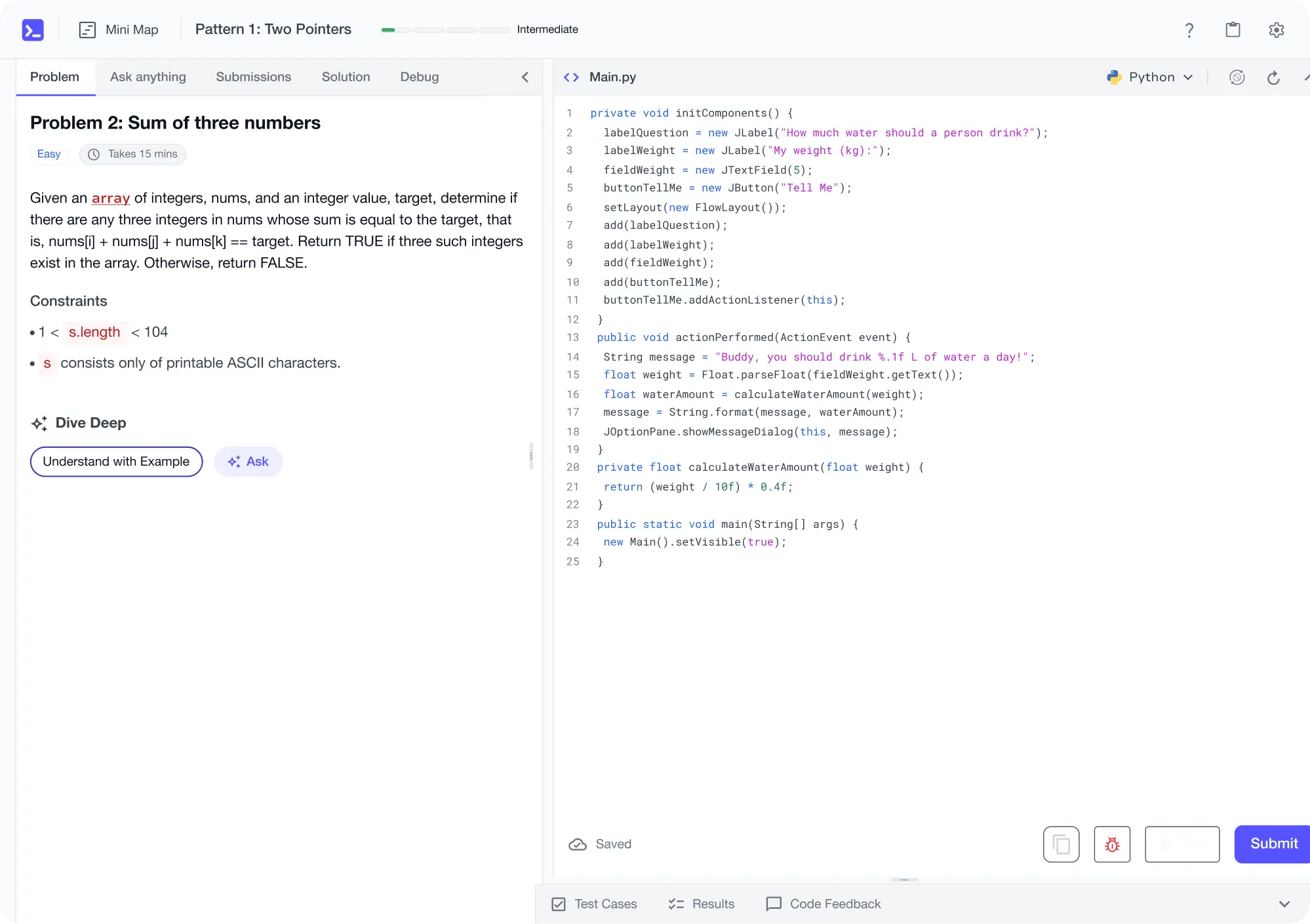

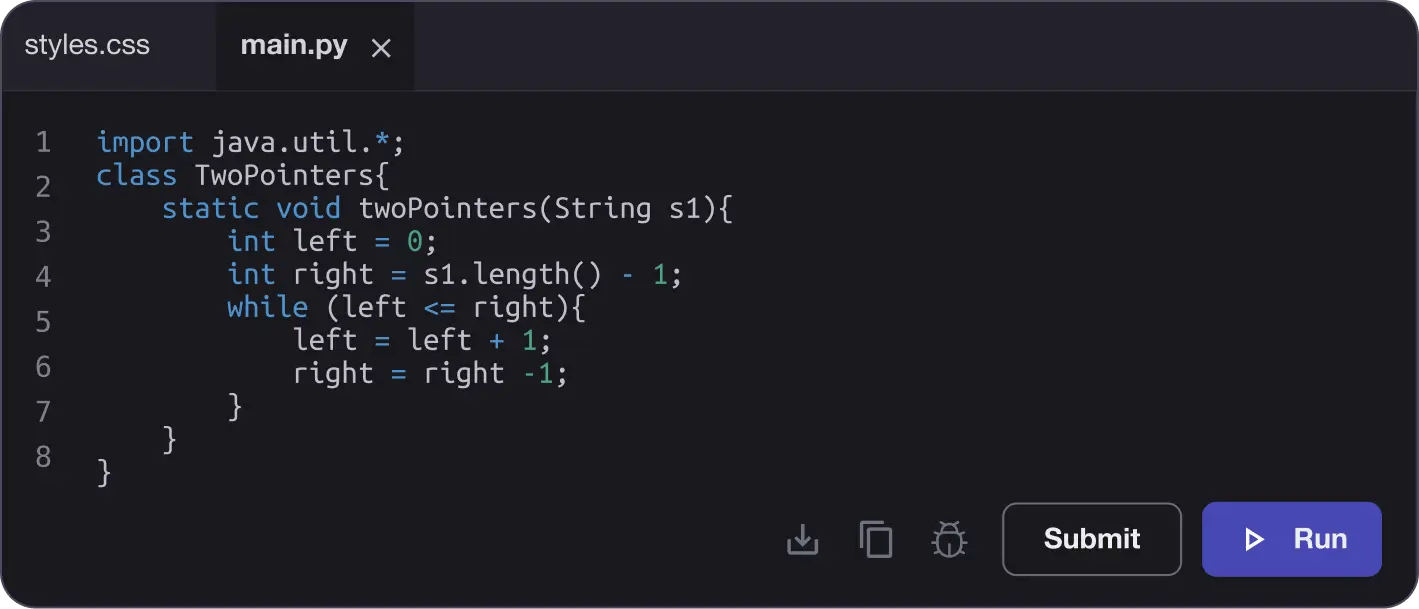

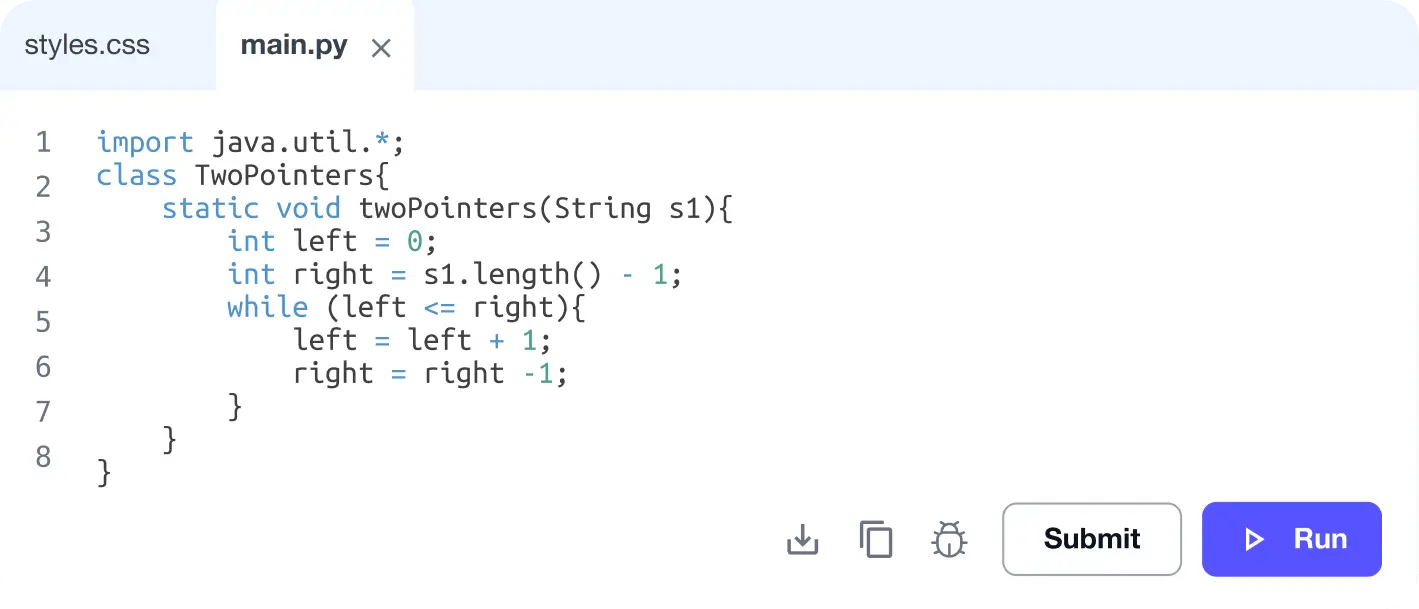

No Passive Learning

Learn by building with project-based lessons and an in-browser code editor

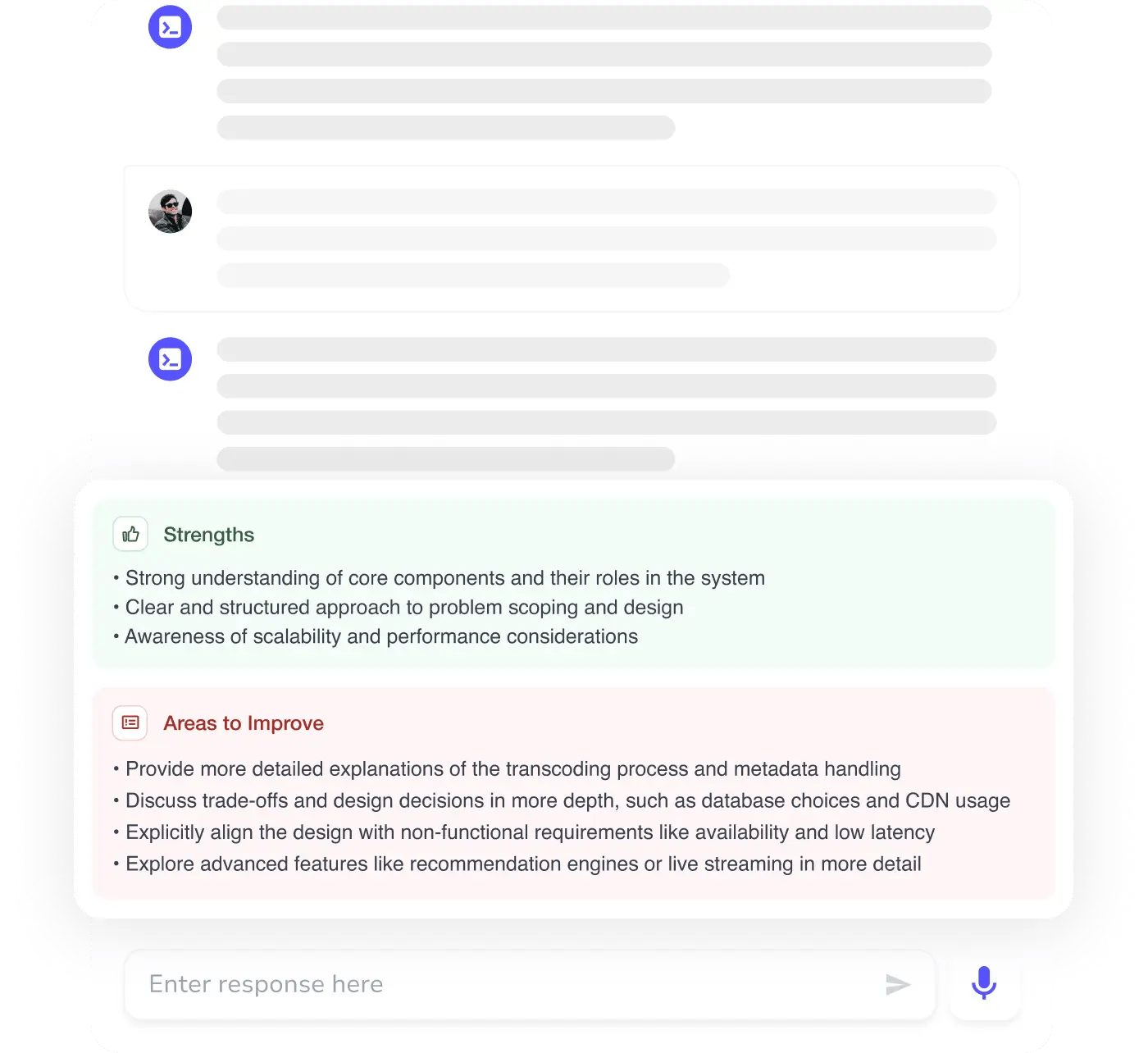

Personalized Roadmaps

The platform adapts to your strengths and skill gaps as you go

Future-proof Your Career

Get hands-on with in-demand skills

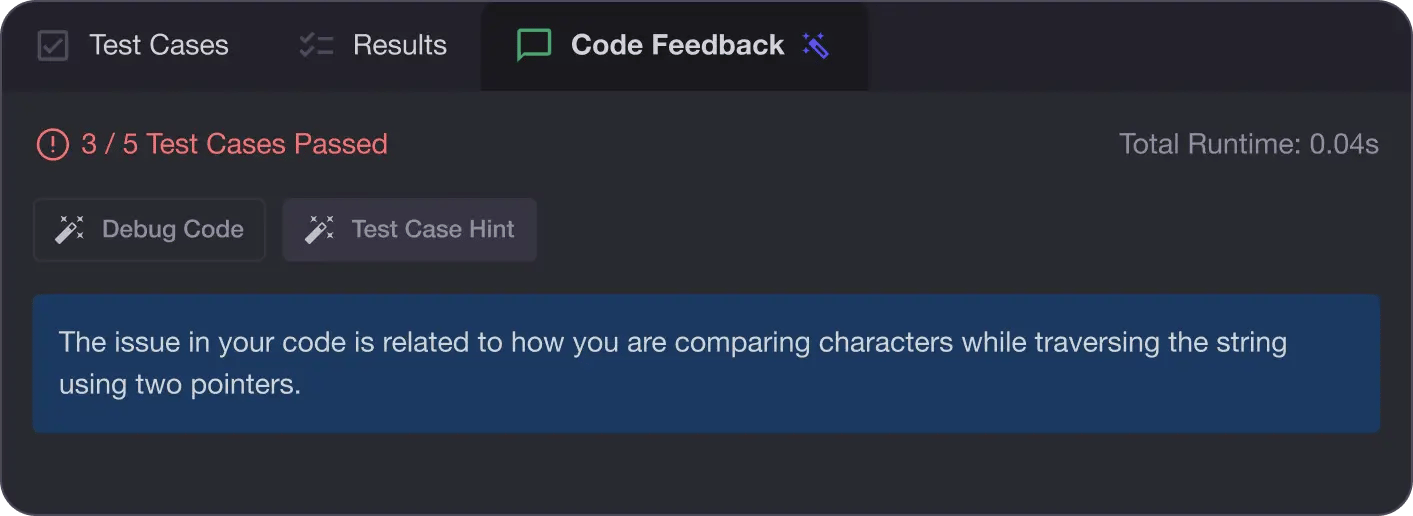

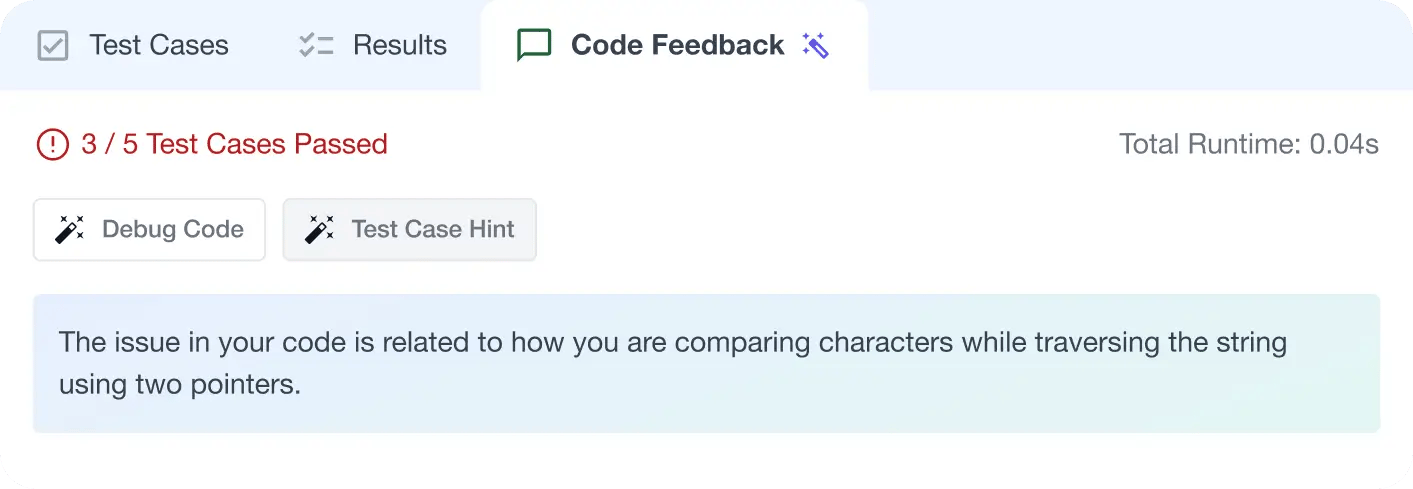

AI Code Mentor

Write better code with AI feedback, smart debugging, and "Ask AI"

MAANG+ Interview Prep

AI Mock Interviews simulate every technical loop at top companies
